Average Ratings

DeepSeek-V4-Pro: 0 Ratings

Phi-4-reasoning-plus: 0 Ratings

Description

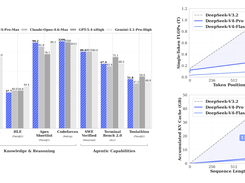

DeepSeek-V4-Pro is a Mixture-of-Experts (MoE) language model built for high-performance reasoning, coding, and large-scale AI applications. It has 1.6 trillion total parameters, of which 49 billion are activated per token, which gives it strong capabilities while keeping computation efficient. It supports a context window of up to one million tokens, which suits long documents and complex workflows, and its hybrid attention architecture reduces computational overhead while maintaining accuracy.

Trained on more than 32 trillion tokens, DeepSeek-V4-Pro performs strongly on knowledge, reasoning, and coding benchmarks. Its training incorporates improved optimization and enhanced signal propagation for better stability. The model offers multiple reasoning modes, so users can choose between faster responses and deeper analytical thinking, and it is designed for agentic workflows and complex multi-step problem solving. Because it is open source, developers and organizations can customize it and deploy it at scale. Overall, DeepSeek-V4-Pro balances performance, efficiency, and scalability for demanding AI applications.
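As a rough illustration of why an MoE model is cheaper to run than its total size suggests, the sketch below computes the share of parameters active per token from the figures quoted above (1.6 trillion total, 49 billion activated) and applies the common rule of thumb of about 2 FLOPs per parameter used, per token, for a forward pass. The helper names are hypothetical, and the FLOPs estimate is an approximation that ignores attention over the context, expert routing, and memory traffic.

```python
# Hypothetical helpers for back-of-the-envelope MoE arithmetic.
# Parameter counts come from the description above; the FLOPs rule is an approximation.

def active_fraction(active_params: float, total_params: float) -> float:
    """Share of total parameters used for each token in an MoE model."""
    if total_params <= 0:
        raise ValueError("total_params must be positive")
    if not 0 <= active_params <= total_params:
        raise ValueError("active_params must be between 0 and total_params")
    return active_params / total_params


def approx_forward_flops(params_used: float) -> float:
    """Rule of thumb: about 2 FLOPs per parameter used, per token, per forward pass.
    Ignores attention over the context, expert routing, and memory traffic."""
    return 2 * params_used


TOTAL = 1.6e12  # 1.6 trillion total parameters
ACTIVE = 49e9   # 49 billion activated parameters

print(f"active share: {active_fraction(ACTIVE, TOTAL):.1%}")
print(f"MoE forward FLOPs/token (approx.): {approx_forward_flops(ACTIVE):.2e}")
print(f"dense-equivalent FLOPs/token (approx.): {approx_forward_flops(TOTAL):.2e}")
```

With these figures roughly 3% of the weights participate in each token, so per-token compute is closer to that of a dense model of about 49 billion parameters, although all 1.6 trillion parameters still have to be stored.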

Description

Phi-4-reasoning-plus is a 14-billion-parameter reasoning model that builds on the original Phi-4-reasoning. It is further trained with reinforcement learning to use more inference-time compute, generating about 1.5 times as many tokens as its predecessor in exchange for higher accuracy. It outperforms OpenAI's o1-mini and DeepSeek-R1-Distill-Llama-70B on most benchmarks, including challenging mathematical reasoning and advanced science questions, and it even outperforms the much larger 671-billion-parameter DeepSeek-R1 on AIME 2025, the 2025 qualifier for the USA Math Olympiad. Phi-4-reasoning-plus is available on Azure AI Foundry and Hugging Face, so developers and researchers can readily use it. Together, these results make it a strong contender among reasoning models.

API Access

DeepSeek-V4-Pro: Has API

Phi-4-reasoning-plus: Has API

Integrations (listed for both DeepSeek-V4-Pro and Phi-4-reasoning-plus)

Buda

DeepSeek

Hugging Face

Microsoft Azure

Microsoft Foundry

MoClaw

OpenClaw

Together AI

Vercel AI Gateway

ZooClaw

Pricing Details

DeepSeek-V4-Pro: Free

Phi-4-reasoning-plus: No price information available.

Vendor Details

DeepSeek-V4-Pro
Company Name: DeepSeek
Founded: 2023
Country: China
Website: deepseek.com

Phi-4-reasoning-plus
Company Name: Microsoft
Founded: 1975
Country: United States
Website: azure.microsoft.com/en-us/blog/one-year-of-phi-small-language-models-making-big-leaps-in-ai/